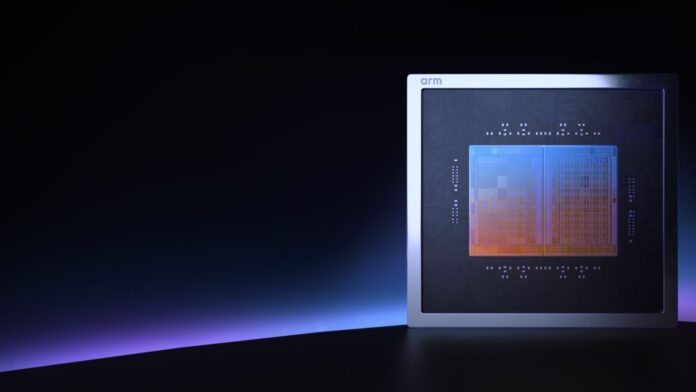

Chip designer Arm has officially entered the specialized AI hardware market with the introduction of its first in-house processor specifically engineered to power AI agents.

While much of AI’s current popularity is driven by chatbots that respond to prompts, the industry is shifting toward “agentic AI”: systems that take proactive, autonomous steps to complete complex goals with minimal human supervision. Arm’s new architecture aims to provide the computational backbone for this transition.

The Orchestrator: Why CPUs Matter for AI Agents

In the current AI landscape, Graphics Processing Units (GPUs) are the heavy lifters; their parallel processing power is essential for training and running Large Language Models (LLMs). However, running an autonomous agent requires more than just raw mathematical throughput. It requires decision-making, task management, and the ability to handle complex, branching logic.

This is where the Central Processing Unit (CPU) becomes critical. If GPUs supply the raw power of an AI system, the CPU is the conductor of the orchestra. It manages the flow of data, dispatches work to the various accelerators, and keeps all components coordinated as the agent executes its tasks.
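To make the conductor analogy concrete, here is a minimal, purely illustrative Python sketch of an agent control loop. It is not Arm software. `accelerator_infer` is a hypothetical stand-in for a model call that would run on a GPU or other accelerator, and `run_tool` is a hypothetical tool that the CPU executes directly. The sketch shows how the work splits: the heavy model call is offloaded, while queueing, branching, and bookkeeping stay on the CPU.

```python
from collections import deque


def accelerator_infer(prompt: str) -> str:
    """Hypothetical stand-in for a model call offloaded to a GPU/accelerator.

    This toy "model" just picks the next action from keywords in the prompt.
    """
    if "search" in prompt:
        return "TOOL:search"
    if "summarize" in prompt:
        return "TOOL:summarize"
    return "DONE"


def run_tool(name: str, task: str) -> str:
    """Hypothetical tool executed on the CPU (I/O, lookups, bookkeeping)."""
    return f"{name} result for '{task}'"


def orchestrate(tasks: list[str]) -> list[str]:
    """CPU-side control loop: queue tasks, call the accelerator, branch on its answer."""
    queue = deque(tasks)
    log: list[str] = []
    while queue:
        task = queue.popleft()
        action = accelerator_infer(task)  # heavy math would be offloaded here
        if action.startswith("TOOL:"):  # branching logic stays on the CPU
            tool = action.split(":", 1)[1]
            log.append(run_tool(tool, task))
        else:
            log.append(f"finished '{task}'")
    return log


print(orchestrate(["search flights", "summarize report", "say hi"]))
```

In a real deployment, the accelerator call would go to a model server, and the loop would juggle many agents at once. That coordination is the CPU-side work the article describes.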

Technical Specifications and Architecture

Arm’s new AGI CPU moves away from the limitations of “general-purpose” computing to focus on inference: the process of an AI model actually performing a task in real time.

Key technical highlights include:

– Advanced Manufacturing: Built on a cutting-edge 3-nanometer process.

– High Core Density: Features up to 136 Neoverse V3 cores per chip, reaching clock speeds of 3.7 GHz.

– Memory Efficiency: Delivers a memory bandwidth of 6 GB/s per core.

– Scalable Design: Two chips can be packed into a single server blade (272 cores), and blades can be stacked 30 to a rack, giving a single rack 8,160 cores working in parallel.
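The scaling figures above follow from simple multiplication. The Python sketch below reproduces them from the article’s stated numbers, reading “racks of 30” as 30 blades per rack. The per-chip bandwidth line assumes that the 6 GB/s figure applies to every core at once. That is an assumption, not a stated spec.

```python
# Figures as stated in the article; "racks of 30" is read as 30 blades per rack.
CORES_PER_CHIP = 136
CHIPS_PER_BLADE = 2
BLADES_PER_RACK = 30
BANDWIDTH_PER_CORE_GB_S = 6  # memory bandwidth per core, GB/s


def rack_totals() -> dict[str, int]:
    """Derive per-blade and per-rack core counts from the per-chip figure."""
    cores_per_blade = CORES_PER_CHIP * CHIPS_PER_BLADE
    cores_per_rack = cores_per_blade * BLADES_PER_RACK
    # Assumption: the per-core bandwidth figure holds for all cores simultaneously.
    chip_bandwidth_gb_s = CORES_PER_CHIP * BANDWIDTH_PER_CORE_GB_S
    return {
        "cores_per_blade": cores_per_blade,
        "cores_per_rack": cores_per_rack,
        "chip_bandwidth_gb_s": chip_bandwidth_gb_s,
    }


print(rack_totals())
# → {'cores_per_blade': 272, 'cores_per_rack': 8160, 'chip_bandwidth_gb_s': 816}
```

The 272-core and 8,160-core totals match the article. The 816 GB/s per-chip value is only an implied aggregate under the stated assumption.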

Challenging the x86 Legacy

For decades, the x86 architecture (pioneered by Intel) has dominated the computing world. However, x86 chips are designed for “legacy support,” meaning they must remain compatible with a vast array of older software and diverse applications. This versatility comes at the cost of efficiency.

By contrast, Arm’s AGI CPU is built on the Armv9.2-A architecture, which carries far less of this legacy overhead and leaves the chip free to be tuned for AI workloads. According to Arm, this specialization brings significant gains:

– Higher Density: Arm claims its AGI CPU delivers more than twice the performance per server rack compared to traditional x86 CPUs.

– Energy Efficiency: The chip draws on Arm’s long-standing strength in power-efficient design, the same heritage behind the chips in most of the world’s smartphones. It aims to ease the massive energy demands expected as AI deployment scales.

The Shift from Training to Action

The semiconductor industry is witnessing a fundamental shift in focus. While the previous wave of AI development focused on training massive models, the next wave is about deployment and agency.

As AI evolves from a tool we talk to into an agent that works for us, demand for data-center hardware that can handle rapid, intelligent orchestration is expected to surge. Arm’s entry into this space suggests that the future of AI may depend as much on the “brains” that manage the tasks as on the “muscle” that processes the data.

Conclusion: By prioritizing specialized orchestration over general-purpose computing, Arm is positioning itself to lead the infrastructure shift required for autonomous, agentic AI to function at a global scale.